Live Inference Benchmark

Real Interactive Demo

See how much faster we are

Directly test latency and reasoning capabilities across leading foundation models running on our optimized infrastructure.

Try preset prompts or enter your own to compare inference speed in real time.

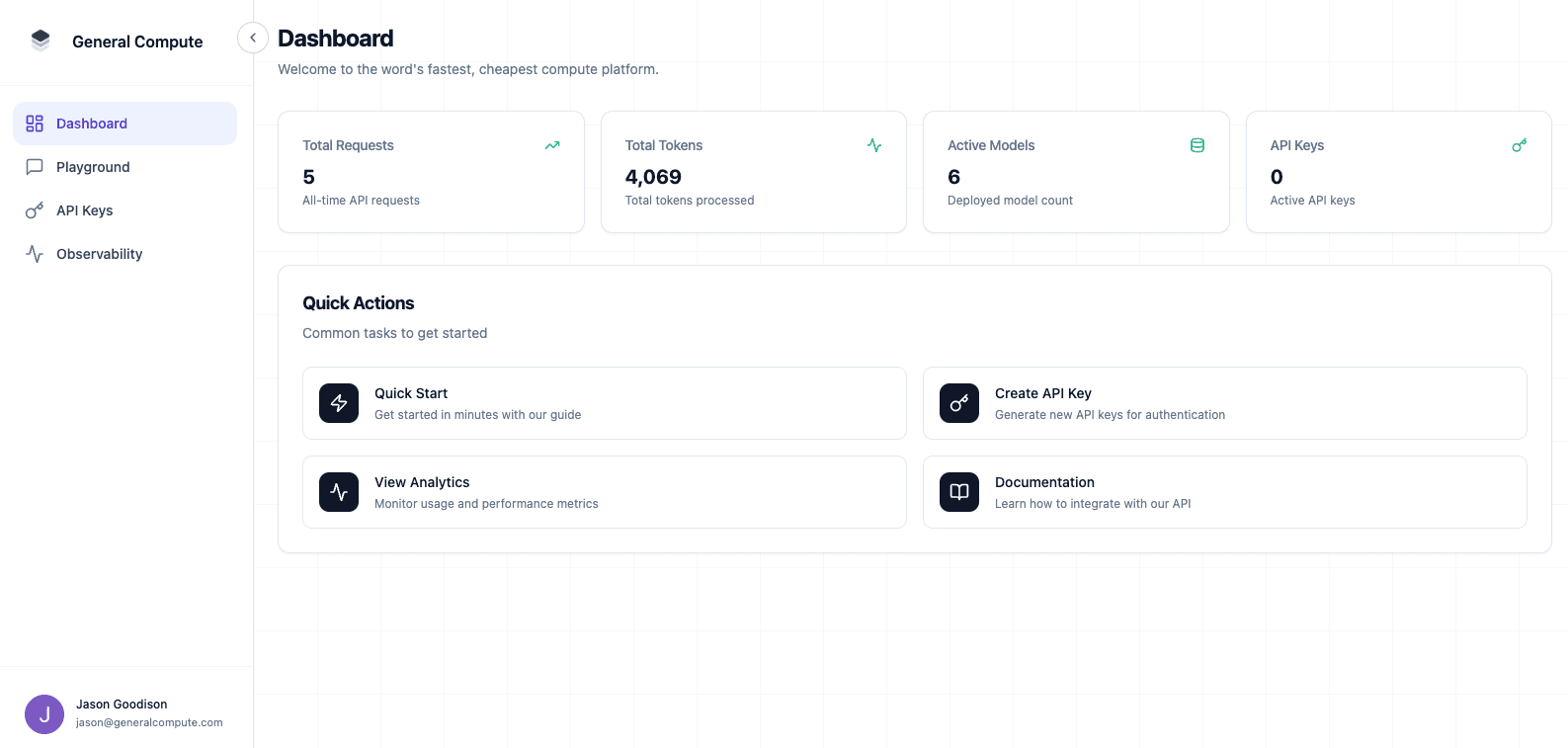

What General Compute Builds

We build a neocloud platform powered by Cerebras and next-generation compute. Deploy coding agents and voice AI on glm-4.7, gpt-oss-120b, and more, or bring your own model to our inference-optimized infrastructure, built for speed and scale.

Build the Future with Us

We're actively onboarding governments, enterprises, and startups. Get in touch to discuss compute needs, partnerships, or investment.